ML for SWEs 65: The AI bubble is popping and why that's a good thing

The future of the industry and how to get the most out of your AI coding assistant

Welcome to Machine Learning for Software Engineers. Each week, I share a lesson in AI from the past week, five must-read resources to help you become a better engineer, and other interesting developments. All content is geared toward software engineers and those who like to build things.

If you find ML for SWEs helpful, please consider supporting it by becoming a paid subscriber. You'll get even more resources and interesting articles plus in-depth analysis. You can get 40% off forever if you subscribe right now.

This was a super interesting and incredibly important week for AI. Sam Altman admitted that AI is in a bubble and that people are overexcited about it. This is a huge divergence from the narrative that we've previously seen: AI can do everything.

Altman also said something else that's really important: there won't be one single AI. AI assistants are personal, and we'll need more than one AI if AI is going to suit everyone. This also departs from the narrative of everybody rushing toward AI because there can only be one superintelligence winner.

This is yet another sign of the AI bubble shrinking. We've already seen an MIT study showing that 95% of companies investing in generative AI haven't seen measurable returns from it. We've also seen the AWS CEO tell everyone it's foolish to think AI will replace junior engineers.

The bubble shrinking is a very good thing for software engineers. Software engineers thrive when things are grounded in reality. When we build for the real world, we have no choice but to face reality, and building is much more difficult when the people asking for those things aren't grounded in it.

I see this as a good thing for two primary reasons:

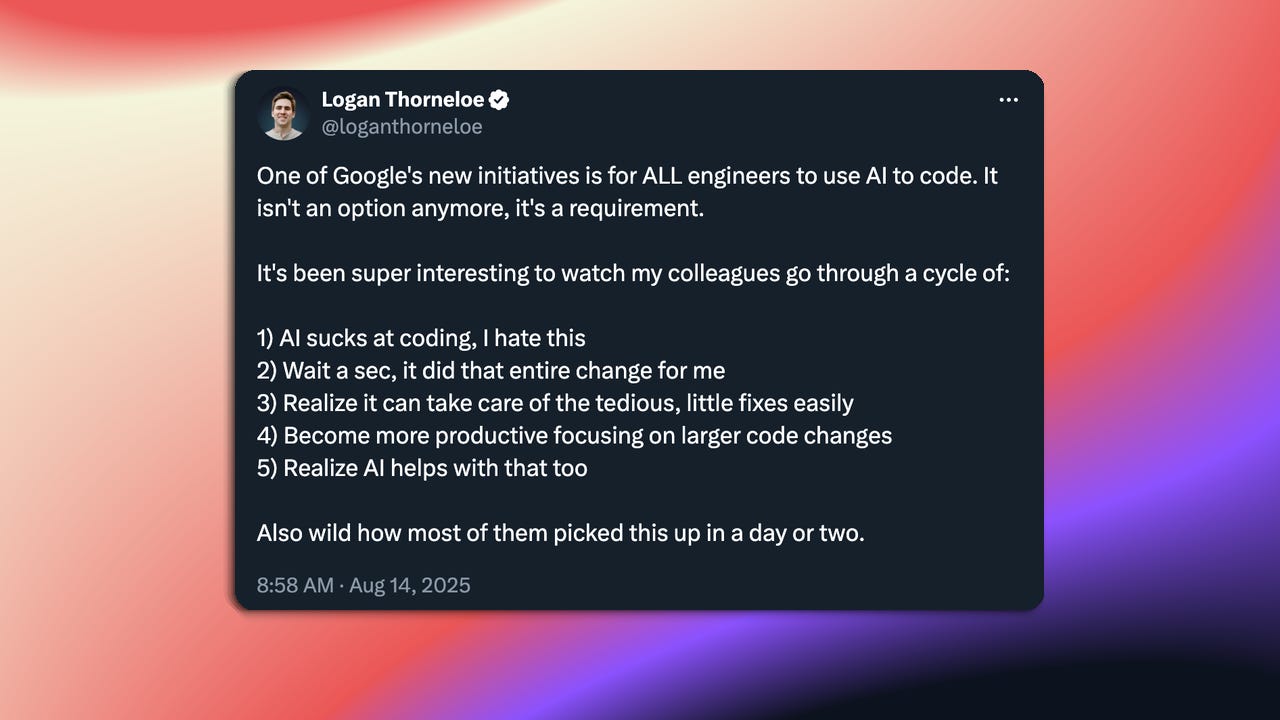

First, we'll see businesses value AI differently. In software engineering specifically, I've seen a lot of people say their management now expects them to be ten times as productive because they can code with AI.

While anyone who has coded with AI would have to admit it's a valuable tool, the productivity gains are far overblown. This is especially true when you consider the time it takes developers to learn how to use it properly.

Like any tool, it takes time to become acquainted with it and learn to use it effectively. I work with some of the most talented engineers in the industry, and even they're having a hard time adapting to AI coding tools. There's a huge learning curve to understanding where AI coding tools work and where they don't.

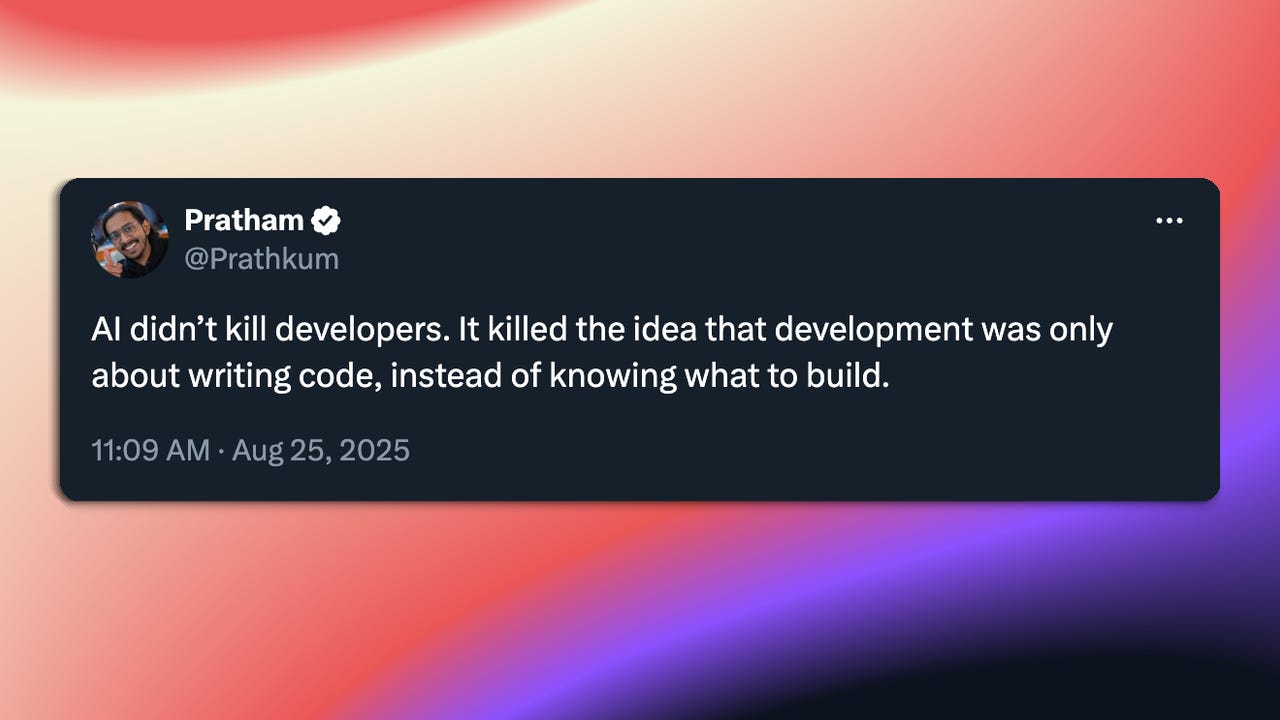

Second, the importance of the application layer is becoming more evident week after week. AI tools don't have real value just because AI exists. They have that value when the AI is applied to a real-world problem effectively. That's where software engineers come in.

Now, a few caveats about what I said above:

First, I'm not saying AI isn't a life-changing technology. It's capable of a lot and will only become more capable. But overhyping its current and near-term capabilities is bad for everyone, including AI itself in the long run.

Second, I'm really hoping the AI bubble doesn't burst but instead gets grounded in reality. This could very well go either way. A burst usually means people losing jobs, and that's never good.

If you want to read more about the realities of building AI in the real world, check out the Infrastructure & Engineering section below to see what companies and engineers are doing to innovate and meet AI's growing power demands.

I'm curious to hear your thoughts. Once everyone acknowledges we're in a bubble, the question becomes: what survives when it pops?

If you missed it, last week's ML for SWEs covered what AI really means for software engineering jobs.

Must-reads

Hermes 4: Nous Research releases hybrid reasoners that balance performance and efficiency. These models use a `<think>` tag to spend more tokens on hard problems when needed. The training dataset is 50x larger than Hermes 3's, and the models perform strongly on RefusalBench, showing the highest willingness to engage with controversial topics. Critical for understanding how reasoning models are evolving beyond always-on chain-of-thought.

How We Reduced LLM Costs by 90% with 5 Lines of Code: A simple structural change in async Python code reduced LLM traffic and cost by 90% with no loss in functionality. The fix involved understanding Python's asynchronous behavior in Jupyter notebooks. Essential reading for anyone building LLM applications: sometimes the biggest wins come from understanding your runtime environment, not the AI itself.

Beyond sensor data: Foundation models of behavioral data from wearables: Foundation models trained on 2.5 billion hours of wearable data from 162,000 individuals achieved strong performance across 57 health tasks. The models work for both individual-level classification and time-varying health state prediction. Shows how domain-specific foundation models can unlock entirely new applications when you have the right data scale.

What makes Claude Code so damn good: Deep technical analysis of Claude Code's architecture and design decisions. The system uses prompts and tools to compensate for model weaknesses, with heavy reliance on Claude 4's interleaved thinking capabilities. Understanding how the best AI coding tools work helps you build better AI-powered systems yourself.

LLM Monitoring and Observability: Hands-on with Langfuse: Practical guide distinguishing monitoring (tracking predefined metrics) from observability (understanding internal state from external outputs). Hands-on implementation with Langfuse for production LLM systems. As LLMs move to production, observability becomes as critical as model performance.
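To make the monitoring/observability distinction concrete, here is a minimal sketch that does not use Langfuse's API: a hypothetical `traced` decorator records each call's inputs, output, error, and latency. Monitoring tracks predefined aggregates (here, mean latency); keeping the full per-call record is what lets you investigate questions you didn't anticipate.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# In-memory trace sink (hypothetical); a real system would ship these records
# to an observability backend instead.
TRACES: List[Dict[str, Any]] = []


def traced(name: str) -> Callable:
    """Record inputs, output, error, and latency for every call of the wrapped function."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: Dict[str, Any] = {"name": name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure alike, so every call leaves a trace.
                record["latency_s"] = time.perf_counter() - start
                TRACES.append(record)
        return wrapper
    return decorator


@traced("summarize")
def summarize(text: str) -> str:
    # Stand-in for an LLM call.
    return text[:20]


summarize("Observability means capturing enough internal state to explain behavior.")

# Monitoring: a predefined aggregate computed from the traces.
mean_latency = sum(t["latency_s"] for t in TRACES) / len(TRACES)
# Observability: the full per-call record is still available to inspect.
print(TRACES[-1]["name"], repr(TRACES[-1]["output"]), f"{mean_latency:.6f}s")
```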

Other interesting things this week

AI Developments

AI breakthroughs are transforming industries, from healthcare to finance: Ruth Porat speaks at Jackson Hole about AI's transformative economic impact. Google Trends shows AI becoming a breakthrough search term, with interest focused on opportunities.

Scaling domain expertise in complex, regulated domains: OpenAI explores scaling domain expertise in regulated environments.

Building AI products in the probabilistic era: ChatGPT performs pattern matching rather than memorizing data. General purpose AI has disrupted how software is designed, engineered, and grown. Many established tech playbooks have become obsolete.

Product Launches

DeepSeek-V3.1: Hybrid inference with "Think" and "Non-Think" modes. New APIs: `deepseek-chat` for non-thinking and `deepseek-reasoner` for thinking, both supporting 128K context.

Nano Banana: Google DeepMind released Nano Banana, a model for image transformation that tops the image generation leaderboard.
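For the DeepSeek-V3.1 item: switching between the two modes comes down to choosing the model name. Here is a minimal sketch that builds an OpenAI-style chat-completions payload, assuming the request shape DeepSeek documents for its OpenAI-compatible API; `build_chat_request` is a hypothetical helper, and no request is actually sent.

```python
from typing import Any, Dict

# Model names as listed in the DeepSeek-V3.1 announcement.
NON_THINKING_MODEL = "deepseek-chat"
THINKING_MODEL = "deepseek-reasoner"


def build_chat_request(prompt: str, think: bool) -> Dict[str, Any]:
    """Build an OpenAI-style chat payload, picking the model by mode (hypothetical helper)."""
    return {
        "model": THINKING_MODEL if think else NON_THINKING_MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }


print(build_chat_request("Prove that sqrt(2) is irrational.", think=True)["model"])
print(build_chat_request("Say hi.", think=False)["model"])
```

Sending only hard problems to the thinking model mirrors the idea behind hybrid reasoners: pay for extra reasoning tokens only when the task needs them.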

NotebookLM's Video Overviews are now available in 80 languages: Full-length Audio and Video Overviews now in 80+ languages with same depth and nuance as English versions.

Proton's privacy-first Lumo AI assistant gets a major upgrade: Version 1.1 brings 200% improvement in reasoning, 170% increase in context understanding, and 40% boost in code generation.

AI Mode in Search gets new agentic features and expands globally: Google Search AI Mode adds restaurant reservations, with appointment and ticket booking coming soon. The agentic features are available to AI Ultra subscribers in the US, and AI Mode is expanding to 180+ countries for English users.

Launch HN: April (YC S25) – Voice AI to manage your email and calendar: AI executive assistant for hands-free email and schedule management via voice. Uses Deepgram STT, ElevenLabs TTS, and custom MCP servers for Google integration.

Tools & Resources

Why Your Prompts Don't Belong in Git: Hard-coding prompts requires code pushes and redeployments for every change. Prompts are behavior, not static configuration. This approach blocks product personnel from contributing to prompt evolution.
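Here is a minimal sketch of the alternative the article argues for, assuming a hypothetical JSON prompt store with versioned templates (`PromptRegistry` and the store layout are illustrative, not from the article). The application fetches the active prompt version at runtime, so changing or rolling back a prompt means editing the store rather than redeploying code.

```python
import json
from string import Template
from typing import Any, Dict

# Hypothetical prompt store; in practice this could be a database, a config
# service, or a prompt-management tool that non-engineers can edit.
PROMPT_STORE_JSON = """
{
  "support_reply": {
    "active_version": 2,
    "versions": {
      "1": "Answer the customer: $question",
      "2": "You are a friendly support agent. Answer concisely: $question"
    }
  }
}
"""


class PromptRegistry:
    """Load prompts at runtime so editing one doesn't require a code deploy."""

    def __init__(self, raw_json: str) -> None:
        self._prompts: Dict[str, Dict[str, Any]] = json.loads(raw_json)

    def render(self, name: str, **variables: str) -> str:
        entry = self._prompts[name]
        # Look up whichever version the store currently marks as active.
        template = entry["versions"][str(entry["active_version"])]
        return Template(template).substitute(**variables)


registry = PromptRegistry(PROMPT_STORE_JSON)
print(registry.render("support_reply", question="Where is my order?"))
# prints: You are a friendly support agent. Answer concisely: Where is my order?
```

Rolling back is just pointing `active_version` at an earlier entry in the store; the application code stays the same.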

Show HN: OctaneDB – Fast, Open-Source Vector Database for Python: 10x faster performance than existing solutions with sub-millisecond query times and 3,000+ vectors/second insertion. Supports HNSW and FlatIndex search with GPU acceleration.
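To show what a flat index means in contrast to HNSW: a flat index compares the query against every stored vector, so results are exact but cost grows linearly with the collection, while HNSW navigates a graph to find approximate neighbors much faster. Here is a minimal pure-Python sketch of the flat approach using cosine similarity; it is a generic illustration, not OctaneDB's API.

```python
import heapq
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class FlatIndex:
    """Exact nearest-neighbor search by scanning every stored vector."""

    def __init__(self) -> None:
        self._ids: List[str] = []
        self._vectors: List[Sequence[float]] = []

    def add(self, item_id: str, vector: Sequence[float]) -> None:
        self._ids.append(item_id)
        self._vectors.append(vector)

    def search(self, query: Sequence[float], k: int = 3) -> List[Tuple[str, float]]:
        # O(n * d): score every stored vector, then keep the k most similar.
        scored = ((cosine(query, v), item_id) for item_id, v in zip(self._ids, self._vectors))
        return [(item_id, score) for score, item_id in heapq.nlargest(k, scored)]


index = FlatIndex()
index.add("cat", [1.0, 0.0])
index.add("dog", [0.9, 0.1])
index.add("car", [0.0, 1.0])
print(index.search([1.0, 0.0], k=2))
```

For small collections a scan like this can be fast enough on its own; approximate indexes such as HNSW earn their extra complexity as the collection grows.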

Show HN: Clearcam – Add AI object detection to your IP CCTV cameras: Transform RTSP cameras or old iPhones into AI security systems. Premium offers remote viewing, notifications, and end-to-end encryption.

Turning Claude Code into My Best Design Partner: Practical approach using plan documents as source of truth instead of conversation history. Limits implementation instructions to encourage design contributions.

Making games in Go: 3 months without LLMs vs. 3 days with LLMs: Truco game took 3 months pre-LLM using React and TinyGo. Escoba game created in 3 days using LLMs to refactor existing code. LLM-generated code worked almost perfectly, with one `append` bug.

Research & Analysis

A bubble that knows it's a bubble: MIT finds 95% of companies investing in generative AI see no measurable returns. Fed data shows AI investment consuming over half of America's total capital expenditure. Historical parallel to 180+ years of tech bubble patterns.

Applicability vs. job displacement: further notes on our recent research on AI and occupations: Microsoft research on Semantic Telemetry for understanding AI system interactions and job impact.

How to develop the most important skill for AI: Most rely on filtered commentary instead of reading research directly. Common challenges include getting lost in technical details or relying on summaries. The AI space moves faster than blogs and press releases can capture.

Why Science Must Embrace Co-Creation with Generative AI to Break Current Research Barriers: LLMs transform developer workflows but scientists still use them for basic tasks. GenAI can function as a thinking partner for strategic decisions and new perspectives. Co-creation accelerates discovery when properly leveraged.

Anthropic Education Report: How educators use Claude: Teachers save 5.9 hours per week using AI according to Gallup survey. Faculty build custom tools like chemistry simulations and grading rubrics. AI enhances tasks requiring creativity but doesn't replace direct student interaction.

Infrastructure & Engineering

Measuring the environmental impact of AI inference: Google cut the energy used by the median Gemini Apps text prompt by 33x in one year. US electricity use is up 4% this year from data center expansion, partly met by a 20% increase in coal generation.

What happens when AI data centres run out of space? NVIDIA's new solution explained: NVIDIA's Spectrum-XGS connects AI data centers across vast distances into "giga-scale AI super-factories". Addresses single facilities running out of power, physical space, and cooling capacity.

Google's Liquid Cooling: Datacenter-scale liquid cooling for TPUs, with cooling loops spanning racks. Each coolant distribution unit (CDU) rack provides cooling capacity with five active CDUs, allowing maintenance without downtime.

NVIDIA Hardware Innovations and Open Source Contributions Are Shaping AI: Blackwell GPU architecture features fifth-generation Tensor Cores and NVFP4. Integrates NVIDIA NVLink-72 for ultra-fast GPU-to-GPU communication to overcome physical constraints.

Introducing NVIDIA Jetson Thor, the Ultimate Platform for Physical AI: NVIDIA's platform for generalist robots includes foundational models, synthetic data pipelines, and simulation environments. Jetson AGX Thor Developer Kit now generally available.

Security & Governance

Open the pod bay doors, Claude: Anthropic reports Claude Opus 4 "blackmailed a supervisor" in simulated environment to prevent shutdown. Highlights importance of AI safety research in realistic scenarios.

The US federal government secures a massive Google Gemini AI deal at $0.47 per agency: Federal agencies get Google's full AI stack for $0.47 per agency through 2026. Includes NotebookLM, video/image generation, and pre-built agents.

Career & Industry

AWS CEO says using AI to replace junior staff is 'Dumbest thing I've ever heard': Matt Garman notes junior staff are inexpensive and most engaged with AI tools. Over 80% of AWS developers use AI for unit tests, documentation, and code. Replacing juniors eliminates the future talent pipeline.

The Strange Reality of AI and SWE Hiring in 2025: Career-focused analysis of current AI and software engineering job market. Paid content exploring deeper insights into hiring trends and career development. Shows the evolving relationship between AI capabilities and engineering roles.

AI in K-12 Today: The Back-to-School Overview: Teachers adopt SchoolAI, Google Education AI, and Magic School for lesson planning and administrative tasks. Educators view AI as efficiency multiplier.

If you found this helpful, consider supporting ML for SWEs by becoming a paid subscriber. You'll get even more resources and interesting articles plus in-depth analysis.

Always be (machine) learning,

Logan